I was tasked with making some changes to a site over FTP today. It seems odd that people are still OK with letting developers push and pull files over FTP without so much as a change log, automated linting, testing, etc. Anywho, I tried to find my cowboy hat, but it snowed yesterday, so all my summer gear is put away. Since it’s moderately inappropriate to do cowboy things while looking like a snowboarder, I had to come up with a better way to make working on files over FTP less Wild West and more Gnar Gnar.

TL;DR

- Set up a cron script that maintains a local mirror of the remote FTP host using lftp and automatically commits the changes to a hosted git repo.

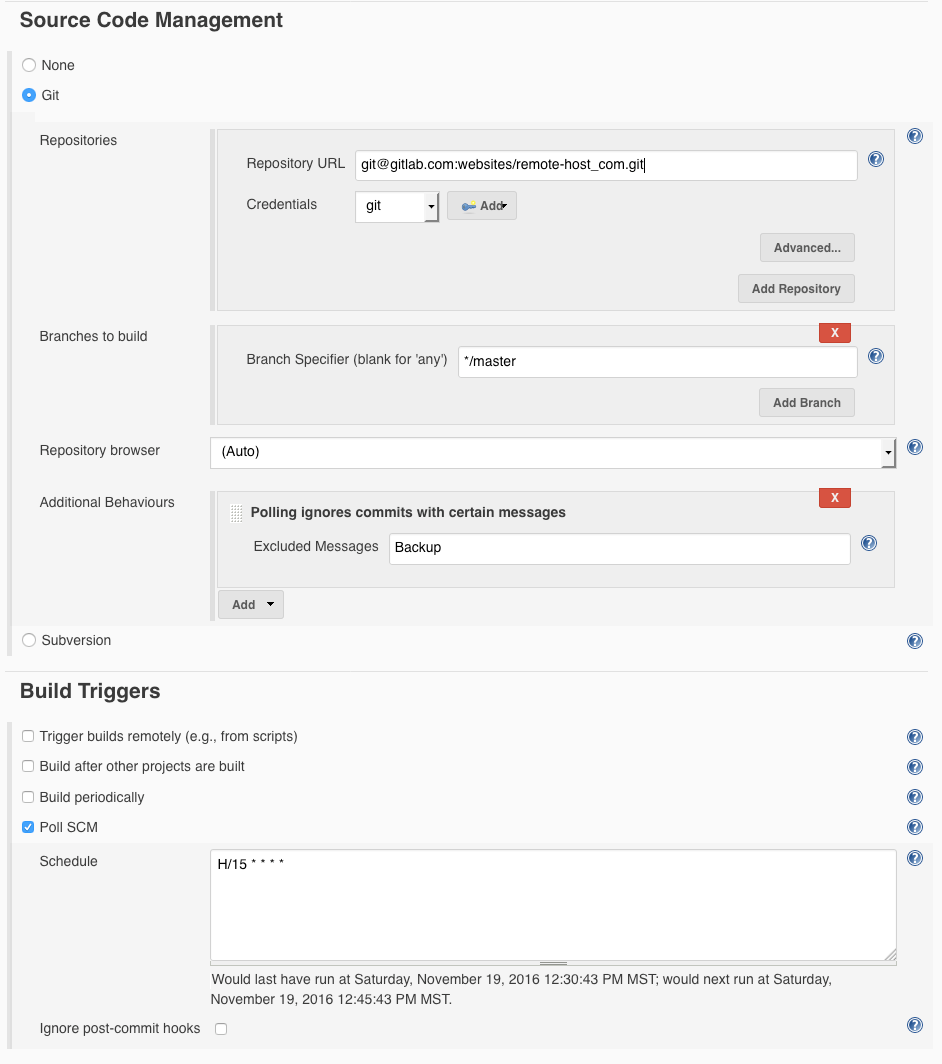

- Set up a project in Jenkins to monitor the git repo for changes

- Ignore the commits created by the cron task mirror script

- Lint the project

- Execute a reverse-mirror lftp script to push the local changes to the remote FTP host, deleting remote files that are no longer relevant.

The intermediary

I do what I can to avoid manually interacting with production servers in an administrative capacity once they are set up and running. For that reason, I have an extra laptop that sits quietly in the corner of my office acting as the intermediary. It’s currently called Cato, affectionately named after a bad-ass butler. Not so coincidentally, Cato runs Jenkins. All of the cron jobs and Jenkins projects run on Cato, so anyone on my team can trigger deployments, work on the same projects, and so on. I don’t think it makes a lot of sense to run any of this on a workstation, though it can be done. Using Jenkins in this instance is honestly a bit of fluff, so you can chop it out if you’d like. It’s far from necessary, but convenient nevertheless.

Automatically update a Git repo with changes from the remote FTP host

Unfortunately, the website is a WordPress install, so files change on the remote FTP host. Those changes need to be brought in and committed to the Git repo automatically. To do this, first install lftp:

sudo apt install lftp

Create a config file called ~/.netrc to store the FTP login credentials:

machine ftp.remote-host.com login mySuperSweetUsername password mySuperSweetPassword
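
The file needs to live in the home directory of whichever user runs lftp. Since it holds a plaintext password, it’s worth making it readable only by its owner. Below is a minimal sketch of a helper that appends an entry and locks down the permissions. The function name `add_netrc_entry` is hypothetical and is not part of lftp.

```bash
#!/bin/bash
# Hypothetical helper (add_netrc_entry is not part of lftp): append a machine
# entry to a netrc file and keep the file readable only by its owner.
add_netrc_entry() {
  local host="$1" user="$2" pass="$3" file="${4:-$HOME/.netrc}"
  # Create the file with owner-only permissions if it doesn't exist yet.
  (umask 077 && touch "$file")
  printf 'machine %s login %s password %s\n' "$host" "$user" "$pass" >> "$file"
  # Tighten permissions in case the file already existed with looser ones.
  chmod 600 "$file"
}
```

For example: `add_netrc_entry ftp.remote-host.com mySuperSweetUsername mySuperSweetPassword`. Typing the password on the command line leaves it in your shell history, so editing the file by hand and running `chmod 600 ~/.netrc` works just as well.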

Create a folder to store the backup dataset in and make sure the appropriate user owns it.

sudo mkdir /Backups
sudo mkdir /Backups/remote-host.com
sudo chown -R jenkins:jenkins /Backups

Create an lftp script /Backups/mirror_remote-host.com.lftp to mirror the remote host to the local backup folder.

set ftp:list-options -a
set cmd:fail-exit true
open ftp.remote-host.com
mirror --verbose --delete --exclude .git/ --exclude deploy.lftp --recursion=always

Create a shell script /Backups/mirror_remote-host.com.sh that invokes lftp and then git to push the changes to our remote repo. One thing worth noting: the commit message for this automatic sync is “Backup”. To keep everything super simple, we’ll later set up our deploy job to ignore commits with this message.

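#!/bin/bash
# Stop if the folder is missing; otherwise lftp --delete would mirror into the wrong directory
cd /Backups/remote-host.com || exit 1
# Update our repo to the latest snapshot
git pull
# Pull in changes from the remote FTP host
lftp -f /Backups/mirror_remote-host.com.lftp
# Commit changes and push
git add --all
git commit -m "Backup"
git push origin master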
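
As written, the mirror script runs `git commit` even when lftp found nothing new. In that case git exits with a non-zero status ("nothing to commit"), and the script carries on to `git push` regardless. That’s harmless, but if you’d rather only commit when something actually changed, the commit step can be guarded. Here is a sketch of one way to do it; the function name `commit_snapshot` is hypothetical.

```bash
#!/bin/bash
# Hypothetical replacement (commit_snapshot is an illustrative name) for the
# add/commit lines of the mirror script: stage everything, and only create a
# commit when something is actually staged.
commit_snapshot() {
  local repo="$1" message="${2:-Backup}"
  git -C "$repo" add --all
  # --quiet implies --exit-code: status 0 means nothing is staged.
  if git -C "$repo" diff --cached --quiet; then
    echo "No changes since the last snapshot"
    return 0
  fi
  git -C "$repo" commit --quiet -m "$message"
}
```

In the mirror script, the `git add --all` and `git commit -m "Backup"` lines would become `commit_snapshot /Backups/remote-host.com Backup`, followed by the existing `git push origin master`.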

Make the bash script executable and run it.

chmod +x /Backups/mirror_remote-host.com.sh
/Backups/mirror_remote-host.com.sh

If you’ve followed along to this step, the git repo probably wasn’t set up beforehand, so the git commands will complain. You will, however, now have a local copy of the remote FTP host. This is the right time to initialize the git repo, set up a centralized git repo (Bitbucket and GitLab work great and are free for private repos) and connect the dots. You’ll probably also want to set up SSH key pairs with your git repo host, although I won’t be covering that here.

cd /Backups/remote-host.com
git init
git add --all
git commit -m "init"
git remote add origin ...
git push origin --all

Now, if you run the mirror shell script again, it won’t complain. Most likely nothing will change, unless a file changed on your remote FTP host in the meantime. You can test this by putting a new file on your remote FTP host and running the mirror shell script; that new file should end up in your git repo when the script is done. Once you’re convinced the shell script is working, toss it into cron. I grab a snapshot of the remote host once a day at 2 AM in my time zone:

0 2 * * * /Backups/mirror_remote-host.com.sh
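
If you later tighten this schedule (see the problems section below), a slow FTP host can make one run overlap the next. Two lftp processes would then write into the same working tree. One way to guard against that is a lock, assuming util-linux’s `flock` is available (it ships with stock Debian/Ubuntu). The wrapper name `run_locked` is hypothetical.

```bash
#!/bin/bash
# Hypothetical wrapper (run_locked is an illustrative name): run a command only
# if no other invocation currently holds the lock file. Requires flock(1).
run_locked() {
  local lockfile="$1"
  shift
  (
    # -n: give up immediately instead of queuing behind a run that's still going.
    flock -n 9 || { echo "Another run holds $lockfile, skipping" >&2; exit 1; }
    "$@"
  ) 9> "$lockfile"
}
```

A cron entry could call a script containing `run_locked /tmp/mirror_remote-host.com.lock /Backups/mirror_remote-host.com.sh`. flock(1) can also run the command itself, so the same guard fits directly on the crontab line: `0 2 * * * flock -n /tmp/mirror_remote-host.com.lock /Backups/mirror_remote-host.com.sh`. The lock file path is an arbitrary choice.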

And there you have it: our hosted Git repo will stay in sync with our remote FTP host on our predefined schedule, unless someone changes the credentials on ya.

The Deploy Script

Create the lftp deploy script. I put this script in the root of the git repo (/deploy.lftp) and commit it so that anyone with the FTP credentials can use it. If I find my cowboy hat, this lets me grab the bull by the horns and run the deploy sync manually from my workstation. It also makes it easy to move Jenkins or the mirror process to different machines.

When mirroring, I exclude the .git folder and the deploy.lftp script so they don’t get published. I also add the --ignore-time argument. A fresh clone of a remote repo sets the modification time of every file to now, and for me that caused every file to be uploaded on each deploy. With --ignore-time, lftp decides whether an existing file needs uploading by comparing sizes only. This seems to work fairly well: I added a single blank space to a file, and that modified file (and only that file) was deployed as expected. Keep in mind that an edit which leaves a file’s size unchanged (swapping one character for another, say) won’t be picked up this way.

set ftp:list-options -a
set cmd:fail-exit true
open ftp.remote-host.com
mirror --reverse --verbose --delete --exclude .git/ --exclude deploy.lftp --ignore-time --recursion=always

From the root of the git repo, you should now be able to use this to deploy changes. You can give it a try right now and watch lftp scan through the folders. If you haven’t changed anything locally, it won’t deploy anything, but that’s the point. Alternatively, you could modify a file in the git repo, or even delete one, and then run the lftp command to watch the changes deploy:

lftp -f deploy.lftp

Remember, you’ll need the ~/.netrc config file with FTP login credentials to use the above lftp script.
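
Before letting a deploy with `--delete` loose on the server, it can be reassuring to see what lftp would upload and delete without touching anything. lftp’s `mirror` has a `--dry-run` option (an alias for `--script=-`) that prints the commands it would run instead of executing them. Below is a small sketch that derives a preview script from deploy.lftp, so the two can’t drift apart. The helper name `make_preview_script` is hypothetical.

```bash
#!/bin/bash
# Hypothetical helper (make_preview_script is an illustrative name): print a
# copy of an lftp script in which each `mirror` command gains --dry-run, so
# lftp lists what it would transfer or delete instead of doing it.
make_preview_script() {
  sed -E 's/^([[:space:]]*)mirror[[:space:]]+/\1mirror --dry-run /' "$1"
}
```

From the repo root: `make_preview_script deploy.lftp > /tmp/deploy-preview.lftp && lftp -f /tmp/deploy-preview.lftp`. The preview still logs in to compare listings, so it needs the same ~/.netrc.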

Using Jenkins to deploy changes from Git to our FTP host

Create a new Jenkins build, configure the remote git repo so that commits with the message “Backup” are ignored, and trigger the build by polling the SCM. You could trigger the build remotely with a webhook instead, but I tend to poll the repo (every 15 minutes). Most of my Jenkins instances aren’t accessible from outside the local network they reside on.

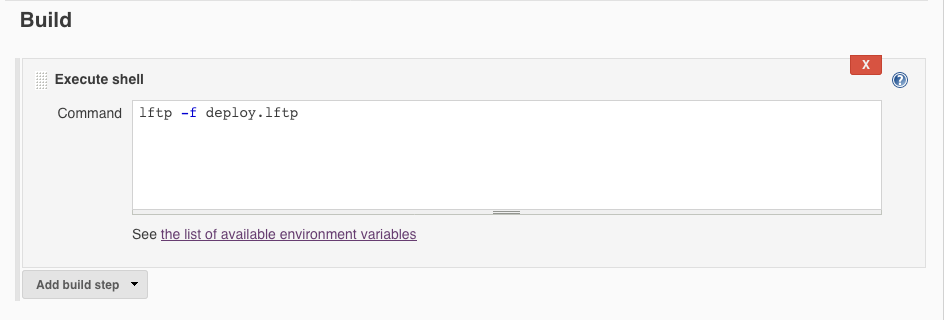

If your Jenkins user has the ~/.netrc config file in place with the correct FTP credentials, you can then set up a build step (or post-build action) to execute the lftp deploy script.
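
Jenkins runs an "Execute shell" build step from the job’s workspace (the fresh git checkout) and sets the WORKSPACE environment variable. The step can therefore call the committed deploy.lftp directly. Here is a sketch of such a step that fails early with a readable message when the credentials or script are missing. The function name `deploy_step` is hypothetical.

```bash
#!/bin/bash
# Sketch of a Jenkins "Execute shell" build step; deploy_step is a
# hypothetical name. The step runs inside the job's workspace (the git
# checkout), where deploy.lftp sits at the repo root.
deploy_step() {
  # lftp reads FTP credentials from the build user's ~/.netrc.
  if [ ! -f "$HOME/.netrc" ]; then
    echo "No ~/.netrc for $(id -un); lftp has no FTP credentials" >&2
    return 1
  fi
  if [ ! -f deploy.lftp ]; then
    echo "deploy.lftp not found in $(pwd)" >&2
    return 1
  fi
  # With cmd:fail-exit set in deploy.lftp, lftp should exit non-zero on a
  # failed command, which in turn fails the Jenkins build.
  lftp -f deploy.lftp
}

# Jenkins sets WORKSPACE for every build; elsewhere this part is skipped.
if [ -n "${WORKSPACE:-}" ]; then
  cd "$WORKSPACE" && deploy_step
fi
```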

Technically, that’s all you need. You can now trigger the build manually and watch the console as it runs through the motions. Alternatively, you could push a change to your hosted git repo, wait a few minutes, and watch the job trigger on its schedule.

I have to toss in a disclaimer here. I’m absolutely against setting up continuous deployments without a true build process (including tests) in place to ensure that what you’re deploying is a legitimate code base. However, the point of this post is to outline the technical specifics of wrapping a changing FTP host with continuous deployment using Jenkins. So I’m not going to further dilute this already long post with unnecessary extras or language- and project-specific conventions. Now, despite having just written that, I strongly suggest that you at least implement a build process that lints your code base and tests the most mission-critical elements in some way. I also suggest having Jenkins notify someone who can deal with problems when the build deploys.
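
For this WordPress site, a bare-minimum lint stage could run PHP’s built-in syntax check (`php -l`) over every .php file before deploying. The sketch below assumes the php CLI is installed on the Jenkins machine. The function name `lint_php` is hypothetical.

```bash
#!/bin/bash
# Hypothetical lint stage (lint_php is an illustrative name): run PHP's
# built-in syntax check on every .php file under a directory, skipping .git.
# Returns non-zero if any file fails, so it can gate the deploy.
lint_php() {
  local dir="${1:-.}" failed=0 file out
  while IFS= read -r -d '' file; do
    # php -l exits non-zero when the file has a syntax error.
    if ! out=$(php -l "$file" 2>&1); then
      printf '%s\n' "$out" >&2
      failed=1
    fi
  done < <(find "$dir" -path "$dir/.git" -prune -o -type f -name '*.php' -print0)
  return "$failed"
}
```

In the Jenkins build step, `lint_php . && lftp -f deploy.lftp` would then refuse to deploy a tree with a syntax error. It catches typos, not logic bugs, so it’s no substitute for real tests.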

Problems with this approach

The biggest issue is likely that any files modified on the server between mirror snapshots will be lost when the deploy is triggered. You can shrink the window in which this can happen by running the mirror snapshot script at short intervals. Using multiple parallel transfers helps too, since each mirror then finishes quickly.
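
lftp’s `mirror` accepts `--parallel=N` to transfer N files at once. Below is a sketch of a helper that writes the backup mirror script from earlier with that option added. The name `write_mirror_script` and the default of 4 are assumptions. Many FTP servers limit concurrent connections per client, so it’s worth starting low.

```bash
#!/bin/bash
# Hypothetical helper (write_mirror_script is an illustrative name): write the
# backup mirror script with lftp's --parallel option so several files are
# fetched at once, shortening each snapshot.
write_mirror_script() {
  local host="$1" out="$2" parallel="${3:-4}"
  cat > "$out" <<EOF
set ftp:list-options -a
set cmd:fail-exit true
open $host
mirror --parallel=$parallel --verbose --delete --exclude .git/ --exclude deploy.lftp --recursion=always
EOF
}
```

For example, `write_mirror_script ftp.remote-host.com /Backups/mirror_remote-host.com.lftp 4`. Paired with a tighter cron schedule such as `*/15 * * * *` instead of `0 2 * * *`, this narrows the window, though it never closes it.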

The inverse issue exists as well. If you merge a commit to the master branch of the git repo and the mirror script runs before Jenkins picks up the deploy, the changes merged into master will be overwritten by the mirror script. This isn’t as bad as the previous issue, since the files don’t actually get deleted; they just get buried in a commit. Nevertheless, it’s an issue that could gimp a deployment.

You could overcome both of these problems with a more thorough build process in Jenkins. It would grab the latest mirror from the remote host, alert the tribe if changes are present, and attempt to merge the two change sets before deploying. If I find myself doing that, I’ll outline the process and results here.

Additional Resources

While putting the above scripts and this outline together, I found these resources useful:

GIT-FTP https://github.com/git-ftp/git-ftp